Ukraine War: 1,300,000 Russian Dead

Tagged: MathInTheNews / Politics / Sadness / Statistics

Every day in Ukraine has been bad for the last 4+ years, but today we’ve passed a new milestone.

The Update from Ukraine

As incorrigibly persistent readers of this Crummy Little Blog That Nobody Reads (CLBTNR) know, we have been expressing… strong opinions… about Russia’s invasion of Ukraine. [1] [2] [3] [4] [5] [6] [7] [8] [9] [10] [11] [12] [13] [14] [15] [16] [17]

The short version: we disapprove.

We’ve been doing some quantitative modeling of the casualty rates (and, at least initially, all the other Russian losses). Lately, we’ve been updating every 100,000 (!) Russian deaths. As you can see below, Russia has passed another grim milestone of human sacrifice of their own citizens: 1.3 million dead, in combat operations in Ukraine alone.

We’ve previously defended our use of the Ukrainian Ministry of Defence as a data source; read previous posts for details. Suffice to say: they seem reasonable by comparison with other sources, and I don’t care to argue about it.


Reminder About Russian Demographics

Let’s remind ourselves of the hole being blown in the middle of the Russian demographic of military age males, e.g., about 18-44 years old. Take:

- an estimate of the Russian population,

- divide by 2 to get the men (I’m sure there are Russian women who are soldiers, but I never hear about them),

- then take roughly the middle third as credibly military age, depending on how coercive the Russian government wants to get.

With a population of roughly 144 million, that recipe gives about 24 million men of military age. So now our 1.3 million dead is $100\% \times 1.3\ \mbox{million} / 24\ \mbox{million} = 5.42\%$ of the military-age male population, now likely dead in Ukraine.

The Russian emigration and COVID-19 deaths (see older post [15]: 900,000 men of military age emigrated and about 67,000 dead of COVID-19) cost about an extra million, for a total loss of 2.3 million. That’s $100\% \times 2.3\ \mbox{million} / 24\ \mbox{million}$, for a total loss of about 9.58% of the Russian men of military service age.

That’s getting on towards 10% of the relevant demographic!
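For anyone who wants to check the arithmetic above, here is a minimal Python sketch. The 144 million population figure is an assumption here (it is the round number that reproduces the 24 million used above); the other numbers come from the text.

```python
# Back-of-the-envelope check of the demographic percentages.
# ASSUMPTION: a Russian population of about 144 million, chosen because
# halving it and taking a third reproduces the 24 million used in the text.
population = 144_000_000
military_age_men = population / 2 / 3   # men only, then the "middle third"

killed = 1_300_000                      # as reported by the Ukrainian MoD
emigrated_and_covid = 1_000_000         # 900,000 + 67,000, rounded as in the text

pct_killed = 100 * killed / military_age_men
pct_total = 100 * (killed + emigrated_and_covid) / military_age_men

print(f"military-age men: {military_age_men / 1e6:.0f} million")
print(f"killed:           {pct_killed:.2f}%")
print(f"total loss:       {pct_total:.2f}%")
```

Changing `population` shows how sensitive the percentages are to that first, rough estimate.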

These men are gone:

- They will never return to work in the Russian economy.

- Their children will either grow up without fathers or never be born at all.

- Many women in the middle demographic of Russia will face the impossibility of finding husbands.

This is the sort of thing that starts revolutions. (Though Russia is famous for tolerating suffering among its people, for cultural reasons that are inscrutable to me.)

These are truly horrible times.

Our Segmented Regression Model

Our “segmented” regression model is basically a linear model of the number of soldiers killed versus time, except that it can have a kink in it. As we explained previously, this means the number of soldiers looks like:

\[\mbox{Soldiers}_t = \beta_0 + \beta_1 \mbox{DayNum}_t + \beta_2 \theta(\mbox{DayNum}_t - \psi) (\mbox{DayNum}_t - \psi) + \epsilon_t\]

where:

- $t$ indexes data points in time (here measured in days since 2023-Jan-22; the war itself started earlier, but this is when we started paying quantitative attention),

- $\psi$ is a parameter for estimating the day number at which the kink occurs,

- $\theta()$ is the Heaviside step function (0 for negative argument, 1 for positive argument, here used as an indicator for “after day number $\psi$”, i.e., the fancy-pants modeling language for an “if” statement), and

- $\epsilon_t \sim N(0, \sigma^2_{\mbox{Soldiers}|\mbox{DayNum}})$ is the usual error term, normally distributed around 0 with the conditional variance shown.
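If the step-function notation is off-putting, the mean of the model can be sketched in a few lines of Python. This is only an illustration of the formula, not the code behind the analysis, and the parameter values in the example call are hypothetical.

```python
import numpy as np

def expected_soldiers(day_num, beta0, beta1, beta2, psi):
    """Mean of the segmented model: a line whose slope changes by beta2 at psi."""
    day_num = np.asarray(day_num, dtype=float)
    # theta(d - psi) * (d - psi) is a "hinge": 0 before the kink and
    # (d - psi) after it, which is exactly what np.maximum computes.
    hinge = np.maximum(day_num - psi, 0.0)
    return beta0 + beta1 * day_num + beta2 * hinge

# Hypothetical parameters, purely for illustration (not the fitted values).
example = expected_soldiers([0, 100, 200], beta0=1000.0, beta1=10.0, beta2=5.0, psi=100.0)
print(example)  # [1000. 2000. 3500.]
```

Before day 100 the example climbs at 10 per day; after it, at 10 + 5 = 15 per day.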

If we consider expectation values, the interpretation is immediately obvious for the mean number of casualties at a given time:

\[E[\mbox{Soldiers}] = \begin{cases} \beta_0 + \beta_1 \mbox{DayNum}, & \mbox{if } \mbox{DayNum} \lt \psi \\ \beta_0^* + (\beta_1 + \beta_2) \mbox{DayNum}, & \mbox{if } \mbox{DayNum} \ge \psi \end{cases}\]

Here:

- $\beta_1$ is the slope before the kink,

- $\beta_1 + \beta_2$ is the slope after the kink, and

- $\beta_0$ is the intercept before the kink & $\beta_0^*$ is the post-kink intercept (depending on the slope difference and the location of the kink in a way about which we generally do not care, for most purposes).

The 2 slopes $\beta_1$ and $\beta_1 + \beta_2$ are the things about which we care most: did the rate of killing Russian soldiers actually go up after the kink?
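For the curious, the post-kink intercept follows directly from the model equation. When $\mbox{DayNum} \ge \psi$ the step function is 1, so:

\[\begin{aligned} E[\mbox{Soldiers}] &= \beta_0 + \beta_1 \mbox{DayNum} + \beta_2 (\mbox{DayNum} - \psi) \\ &= (\beta_0 - \beta_2 \psi) + (\beta_1 + \beta_2) \mbox{DayNum} \end{aligned}\]

That is, $\beta_0^* = \beta_0 - \beta_2 \psi$. At $\mbox{DayNum} = \psi$ both branches give $\beta_0 + \beta_1 \psi$, so the fitted line bends at the kink but does not jump.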

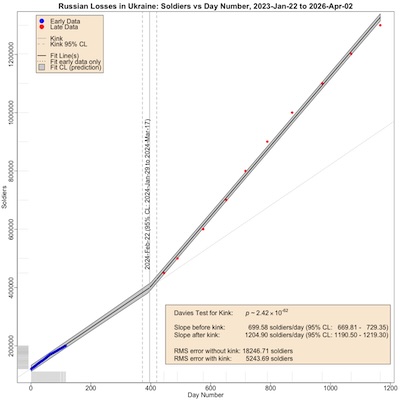

Here’s what the result looks like, in a plot annotated with lots of delicious statistics:

Statistical Significance & The Need for a Kink

Let’s start with reading the wheat-colored box in the lower right of the plot.

The first item is the Davies test, which tests – essentially – the statistical significance of the difference between before-kink and after-kink slopes. If this gives a large $p$-value, then we realize the true model could be kink-free and what we see is due to chance alone. If this gives a very small $p$-value, then we accept that the kink is worthwhile.

As you can see, $p \sim 10^{-62}$ is absurdly statistically significant: we should very much believe the kink is real, and a necessary part of our model.

At the bottom of the wheat-colored box at the bottom right is a confirmation of this, comparing the errors of the straight line and kinked line models. This is the root-mean-square (RMS) error, measuring how each data point deviates from the fitted model. Note that the RMS error of the kinked model is 3.48 times lower than the simple straight-line model. So we again have evidence that the kinked model does a better job of explaining the data.
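To make the RMS comparison concrete, here is a minimal Python sketch on synthetic data. It is not the script behind the plot: the data are invented for illustration, and the kink is found by a plain grid search over candidate values of $\psi$, a stand-in technique that is not necessarily what the original analysis does. The function names are hypothetical.

```python
import numpy as np

def rms(residuals):
    """Root-mean-square of a vector of residuals."""
    return float(np.sqrt(np.mean(np.square(residuals))))

def fit_line(day, y):
    """Ordinary least-squares straight line; returns the fitted values."""
    slope, intercept = np.polyfit(day, y, deg=1)
    return intercept + slope * day

def fit_kinked(day, y, psi_grid):
    """Try each candidate kink position, solve least squares, keep the best."""
    best = None
    for psi in psi_grid:
        X = np.column_stack([np.ones_like(day), day, np.maximum(day - psi, 0.0)])
        beta, *_ = np.linalg.lstsq(X, y, rcond=None)
        sse = float(np.sum((y - X @ beta) ** 2))
        if best is None or sse < best[0]:
            best = (sse, psi, X @ beta)
    return best[1], best[2]   # (estimated kink position, fitted values)

# SYNTHETIC data, invented for illustration: a kink at day 400, slopes of
# 700/day before and 1200/day after, plus Gaussian noise.
rng = np.random.default_rng(0)
day = np.arange(0.0, 1201.0)
y = 100_000 + 700 * day + 500 * np.maximum(day - 400, 0.0) + rng.normal(0, 2000, day.size)

rms_line = rms(y - fit_line(day, y))
psi_hat, fitted = fit_kinked(day, y, psi_grid=np.arange(50.0, 1151.0))
rms_kinked = rms(y - fitted)

print(f"estimated kink at day {psi_hat:.0f}")
print(f"RMS error: line {rms_line:.0f}, kinked {rms_kinked:.0f}, "
      f"ratio {rms_line / rms_kinked:.1f}")
```

The ratio printed on the last line is the same kind of number as the 3.48 quoted above, though of course its value here reflects the invented data, not the real casualty reports.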

The Killing Rates Before & After the Kink

Next consider the middle lines in that wheat-colored box at the bottom right.

These tell us the slopes of the 2 lines, or the killing rates, before and after the kink. Ukraine went from killing about 700/day to killing about 1,200/day. That’s a tremendous increase, percentage-wise:

\[100\% \times \frac{1204.90 - 699.58}{699.58} = 72.23\%\]

(Last time, we got around 76%, so this tracks nicely with the previous result.)

We know from the Davies test that this is statistically significant, but we can confirm it here. Just look at the 95% confidence limits on the slope estimates: they are not just disjoint, but far apart. This means there is almost no chance that the slopes are actually equal and that we merely saw them differ by chance.
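The percentage is simple enough to check by hand, but here is a small Python sketch using the two slope estimates quoted in the formula above. The function name is hypothetical.

```python
def percent_increase(before, after):
    """Relative change from `before` to `after`, in percent."""
    return 100.0 * (after - before) / before

slope_before = 699.58    # soldiers/day before the kink, from the formula above
slope_after = 1204.90    # soldiers/day after the kink, from the formula above

increase = percent_increase(slope_before, slope_after)
print(f"{increase:.2f}%")  # 72.23%
```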

The Plotted Data

Now we’re ready to look at the plot!

- Axes:

  - The horizontal axis is time, measured in days from 2023-Jan-22 to 2026-Apr-02.

  - The vertical axis is number of Russian soldiers reported killed by the Ukrainian MoD.

- Data points:

  - The blue points in the lower left are the initial training data, 116 days from 2023-Jan-22 to 2023-May-17. They established the initial linear model.

  - The red points in the upper right are more sparsely collected data points from 2024-Apr-10 to 2026-Apr-02. They were collected at approximately every 100,000 increase in casualties (with one exception at 450k).

- Kink position: The vertical gray line indicates where the fitting procedure estimates the kink in the line should be, in this case at 2024-Feb-22. The vertical gray dashed lines on either side indicate the 95% confidence limits, i.e., we’re 95% certain the kink is between 2024-Jan-29 and 2024-Mar-17. (Previously, we’d estimated the kink at 2024-Mar-08, so this is pretty consistent with that.)

- Fitted lines:

  - The black line is the kinked line that we fit, with a kink at 2024-Feb-22. The gray bands around it are the 95% prediction limits, i.e., if you were to use the line to predict a new data point, you’d be 95% certain it would be in the gray band. (I’m not super confident about these confidence limits! The segmented fit seems to be telling me something to which I’m not accustomed; suffice to say the fit is really good.)

  - The dotted line that continues on straight at the kink shows where the original model would have gone, had we not introduced the kink. Obviously, reality is much more lethal to Russian soldiers than the initial model predicted.

The difference between the original straight line (lower left + dotted line continuing past the kink) and the segmented line is visually brutal confirmation that the kinked model is better. This reinforces what we learned above with the Davies test (yes, the kink is likely real) and the reduction in RMS error from 18,000 soldiers to 5,000 soldiers (yes, the kinked model is much closer to the data).
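Since the model works in day numbers while the plot is described in calendar dates, a small Python sketch of the conversion may help. It assumes 2023-Jan-22 is day 0; the original script might just as well count it as day 1, which would shift everything by one. The function names are hypothetical.

```python
from datetime import date, timedelta

# ASSUMPTION: the first data point, 2023-Jan-22, is day 0.
DAY_ZERO = date(2023, 1, 22)

def day_number(d):
    """Days elapsed since DAY_ZERO."""
    return (d - DAY_ZERO).days

def day_to_date(n):
    """Inverse of day_number."""
    return DAY_ZERO + timedelta(days=n)

print(day_number(date(2023, 5, 17)))  # 115: last of the 116 initial daily points
print(day_number(date(2024, 2, 22)))  # 396: the estimated kink
print(day_number(date(2026, 4, 2)))   # 1166: the latest data point
print(day_to_date(396))               # 2024-02-22
```

Under the same assumption, the 95% confidence limits on the kink (2024-Jan-29 to 2024-Mar-17) are day numbers 372 to 420.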

3-Fold Crossvalidation: Is This Real?

The thing that keeps statisticians up at night is worry about overfitting: a more complex model will always fit a bit better, but does it predict better, and is the extra complexity justified?

For example, I could put a kink at every data point. It would perfectly fit any dataset whatsoever, even random data! But… it would be absolute trash at making predictions. The model would be doing little more than memorizing the training data.

That’s why we invented crossvalidation. In this example, we split the dataset into 3 parts called folds, fairly sampling the before-kink and after-kink points in each fold. Then we train the model on 2 of the folds, and measure its prediction qualities on the 3rd fold that it hadn’t seen in training.

If all that looks good (prediction on data subsets never seen during training), then we train one more time on the whole dataset.
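The procedure just described can be sketched in Python as follows. This is a minimal illustration under stated assumptions, not the script behind the results: the data are synthetic (dense early points, sparse late ones, roughly like the real dataset's shape), the kinked model is fit by a simple grid search over $\psi$, and all function names are hypothetical.

```python
import numpy as np

def stratified_folds(is_after_kink, k=3, seed=0):
    """Assign each point to one of k folds, balancing before/after-kink points."""
    rng = np.random.default_rng(seed)
    fold = np.empty(len(is_after_kink), dtype=int)
    for stratum in (False, True):
        idx = rng.permutation(np.flatnonzero(is_after_kink == stratum))
        fold[idx] = np.arange(len(idx)) % k   # deal shuffled points out round-robin
    return fold

def design(day, psi):
    """Design matrix of the kinked model: intercept, day, hinge at psi."""
    return np.column_stack([np.ones_like(day), day, np.maximum(day - psi, 0.0)])

def fit_kinked(day, y, psi_grid):
    """Grid search over psi, with least squares at each candidate (a stand-in fit)."""
    fits = []
    for psi in psi_grid:
        beta, *_ = np.linalg.lstsq(design(day, psi), y, rcond=None)
        fits.append((float(np.sum((y - design(day, psi) @ beta) ** 2)), psi, beta))
    _, psi, beta = min(fits, key=lambda f: f[0])
    return psi, beta

def rms(residuals):
    return float(np.sqrt(np.mean(np.square(residuals))))

# SYNTHETIC data, invented for illustration: 116 daily points before the
# kink, 10 sparse points after it, true kink at day 396.
rng = np.random.default_rng(1)
day = np.concatenate([np.arange(0.0, 116.0), np.arange(440.0, 1167.0, 80.0)])
y = 120_000 + 700 * day + 500 * np.maximum(day - 396, 0.0) + rng.normal(0, 500, day.size)

fold = stratified_folds(day > 396, k=3)
results = []
for f in range(3):
    train, test = fold != f, fold == f
    slope, intercept = np.polyfit(day[train], y[train], deg=1)
    psi, beta = fit_kinked(day[train], y[train], psi_grid=np.arange(120.0, 436.0))
    rms_line = rms(y[test] - (intercept + slope * day[test]))
    rms_kinked = rms(y[test] - design(day[test], psi) @ beta)
    results.append((rms_line, rms_kinked))
    print(f"fold {f}: kink {psi:.0f}, out-of-sample RMS line {rms_line:.0f}, kinked {rms_kinked:.0f}")
```

The stratification matters here because the after-kink points are so few: a purely random split could leave a fold with almost none of them.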

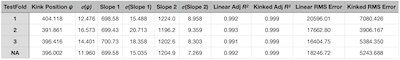

This table shows the results of 3-fold crossvalidation (first three rows) and the final training run on the whole dataset (last row).


- The parameters estimated by the model in each fold are the kink position, slope 1, and slope 2. The thing to take away from the table is that they are pretty stable across all 3 folds of crossvalidation: we’re getting a consistent story on all folds.

- The standard deviations of those parameters are also reported, as an estimate of our uncertainty about the estimates. These are also stable, meaning our uncertainty is consistent across all folds.

- Looking at the adjusted $R^2$ for the simple linear model and the kinked model, we see that in all cases the kinked model did better. But, to be fair, not by much: there wasn’t that much room for improvement anyway (most of the data is in the before-kink dataset, and both models get that right).

- The real test of crossvalidation is the RMS error on the prediction set, the data not seen during training. In all cases, the RMS error of the kinked model is 3-4x lower than that of the simple linear model.

This means, in essence, the kinked model is performing well on out-of-sample data testing, and is superior to the simple linear model. We should believe the kinked model.

Everything required to peer review the above analysis is available for your inspection. [18]

The Weekend Conclusion

Sometime around 2024-Feb-22, the Ukraine war changed in a way that was much more lethal to Russian soldiers (again, as reported by the Ukrainian MoD). I don’t know what that is. It could be a jump in Ukrainian competence at/commitment to drone warfare, or it could be Russians resorting to human wave attacks, or it could be something else. I’m too lazy to work my way through the word salad of war reporting to try to figure it out; all I can document is that it is real.

It’s hard to explain how angry this makes me. Not just at the stupid cruelty of Russia, but at the lukewarm support Ukraine initially got from the West and now the equally stupid responses of Trump. The most frustrating thing for me, as an American, is that other Americans are not angry enough about Trump, and might blow off voting or acquiesce to ICE brutality and voter suppression. That makes us complicit in many of these evils.

Jack Hardy was one of my favorite folk artists. (“Was”, alas, because he is no longer among us.) He was the éminence grise behind many figures in the New York City folk scene, some of whom under his tutelage became quite famous (e.g., Suzanne Vega).

How do I even begin to ‘explain’ a guy whose trademark was inscrutability? He just knew mountains of literature, poetry, and mythology and sang songs that invited you on a stroll through all of it.

His song “Ask Questions”, in a video of a performance shown here, showcases his breadth of knowledge, allusive & cryptic style where each song is almost a riddle, and of course his famous gravelly voice. You didn’t go listen to Jack for operatic purity, you went to have the boundaries of your mind tugged open a bit.

He died before Trump I, but he knew about our responsibilities when living under an evil system. The relevant chorus:

History has eyes, history has ears.

History finds secrets that are buried for years.

Exploded, explained, exposed, and explicit:

History will judge us either stupid or complicit.

And we know… we are not stupid.

Ask questions.

Don’t be complicit. Don’t be stupid. Break the evil, wring it out of the system and replace it with something better.

Even if Democrats win, complacency will be the major sin toward which they will be tempted. Resist even that.

(Ceterum censeo, Trump incarceranda est!)

Notes & References

1: Weekend Editor, “Another Grim Anniversary”, Some Weekend Reading blog, 2023-Mar-02.

2: Weekend Editor, “Do the Ukrainian Reports of Russian Casualties Make Sense?”, Some Weekend Reading blog, 2023-Apr-15.

3: Weekend Editor, “Update: Ukrainian Estimates of Russian Casualties”, Some Weekend Reading blog, 2023-May-01.

4: Weekend Editor, “Updated Update: Ukrainian Estimates of Russian Casualties”, Some Weekend Reading blog, 2023-May-09.

5: Weekend Editor, “Updated${}^3$: Ukrainian Estimates of Russian Casualties Hit 200k”, Some Weekend Reading blog, 2023-May-17.

6: Weekend Editor, “Ukraine Invasion: 250k Russian Dead”, Some Weekend Reading blog, 2023-May-17.

7: Weekend Editor, “Tacitus in Ukraine”, Some Weekend Reading blog, 2023-May-25.

8: Weekend Editor, “Casualties in Ukraine: Grief Piles Higher & Deeper”, Some Weekend Reading blog, 2024-Apr-10.

9: Weekend Editor, “Post-Memorial Day Thought: 500k Russian Dead in Ukraine”, Some Weekend Reading blog, 2024-May-31.

10: Weekend Editor, “Ukraine: 600k Russian Dead”, Some Weekend Reading blog, 2024-Aug-27.

11: Weekend Editor, “Ukraine War: 700k Russian Dead”, Some Weekend Reading blog, 2024-Nov-04.

12: Weekend Editor, “Ukraine War: 800k Russian Dead”, Some Weekend Reading blog, 2025-Jan-08.

13: Weekend Editor, “Ukraine War: 900k Russian Dead”, Some Weekend Reading blog, 2025-Mar-21.

14: Weekend Editor, “Ukraine War: 1,000,000 Russian Dead?!”, Some Weekend Reading blog, 2025-Jun-12.

15: Weekend Editor, “Ukraine War: 1,100,000 Russian Dead”, Some Weekend Reading blog, 2025-Sep-22.

16: Weekend Editor, “Ukraine War: 1,200,000 Russian Dead”, Some Weekend Reading blog, 2025-Dec-31.

17: Weekend Editor, “Ukraine War Casualties: A Segmented Regression Model”, Some Weekend Reading blog, 2026-Jan-28.

18: Weekend Editor, “Updated R script for analysis of Russian Casualties in Ukraine”, Some Weekend Reading blog, 2026-Apr-02.

The data driving the original analysis, in .tsv format, is also available for review by the persistent skeptic.

The data driving this analysis, in .tsv format, is also available for review.

There is also a transcript of running the script, for your review.

This whole mess depends on some idiosyncratic subroutine libraries of our own construction, graphics-tools and pipeline-tools. We are happy to supply these to our most exceptionally persistent skeptics who wish to reproduce these results. :-)
