The Weekend Editrix Exposed to COVID-19: An Adventure with Bayes Rule in Medical Testing

Tagged: COVID / PharmaAndBiotech / Statistics

Last weekend, the Weekend Editrix was exposed to a person who tested positive for COVID-19. The need for rapid testing suddenly became very real for us. While waiting for the test to work, we worked out the Bayesian stats for the test: a positive test means near-100% chance of COVID-19, while a negative test means 89.4% chance of no COVID-19.

What’s the sitch?

We are members of a religious community.

For most of 2020, meetings were quickly transitioned to Zoom, like everything else. Some things worked surprisingly well, and others… not so much. Humans are to some degree social creatures, and in a religious context we often crave the emotions associated with social contact.

So once vaccines were rolled out sufficiently well, we reconvened in person — though vaccinated, masked, socially distanced, and with hand sanitizer everywhere. We also reported (respecting medical privacy) any COVID-19 contacts that might have happened, so people would know when to test. That seemed to work pretty well.

But we learned this afternoon from our religious community that the Weekend Editrix was exposed last weekend. (Your humble Weekend Editor, being laid up with a back injury, participated via Zoom. Any exposure to me would be through the Weekend Editrix.) Suddenly, we were very interested in the availability, price, speed, and accuracy of home COVID-19 test kits, to decide what to do next. This is especially so since the Weekend Editrix works with a social service agency that visits elder care facilities, and we absolutely do not want to inject COVID-19 there!

Rapid antigen test kits

Fortunately, a quick call to our local pharmacy revealed they had several kinds of test kits. But… about 30min later when we arrived, they had only 1 kind of test kit and only 3 of them: the ACON Laboratories Flowflex COVID-19 Antigen Home Test kit, authorized by the FDA on October 4th. [1]

Here’s what the FDA said about authorizing this test:

This action highlights our continued commitment to increasing the availability of appropriately accurate and reliable OTC tests to meet public health needs and increase access to testing for consumers.

“Accurate” means it tells you the truth; “reliable” means it keeps telling you the truth if you test over and over again. Sounds good to me.

It was frustrating that the pharmacy phone call claimed abundance and diversity of tests, but very quickly that situation turned into just a few of exactly 1 kind of test. And, of course this being the United States, they were not free. Limited variety, limited availability, and then only if you can pay.

With a sigh, we paid. It wasn’t a lot at all by our standards, but if we had been poor, or students, or just really desperate, it could have been bad. Especially with therapeutics like molnupiravir and paxlovid coming that only work in early days after symptoms: it will be crucial to have testing be universally available and free. We’re not there yet.

The test

Fortunately, the test was easy enough to operate that even a couple of older PhDs could do it without too much problem. After swabbing the Weekend Editrix’s nose, we used the buffer solution to extract the antigens into solution. We put the 4 required drops of loaded buffer into the sample chamber, and watched the sample strip gradually turn pink as the goop diffused along.

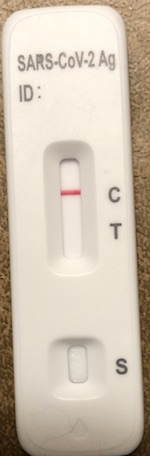

The readout is kind of interesting: there are 2 red bars that might appear, labelled “C” and “T” (photo below; spoiler alert).

- The C bar stands for “control”: it indicates whether the test is working, and must always show up or the test is broken. (If C doesn’t show up, you have to try again with another test kit.)

- The T bar stands for “test”: if it shows up, even faintly, then you’re likely infected. If it doesn’t show up, even faintly, then you’re likely not infected.

I wonder how much we should trust that; how much work is the word “likely” doing there? We had 15 minutes to think it over, while the test did its stuff.

So I read the box insert on the test. (Hey, sometimes reading the manual is The Right Thing, no?)

- It’s described as having a low False Positive Rate (FPR), by which most people understand: if it comes up positive you’ve almost certainly got COVID-19.

- It’s also said to have a somewhat higher False Negative Rate (FNR), by which most people understand: if it comes up negative then you might be in the clear, but there’s some chance you’re not.

“Most people understand” incorrectly.

As a cranky, grizzled old statistician this bothered me. Let’s work out the details while we’re waiting for the test, shall we?

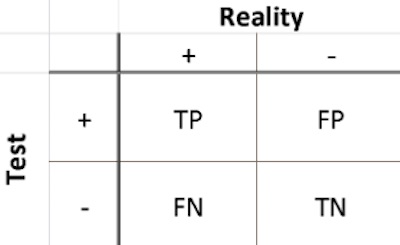

For a binary test like this, there are 2 things going on:

- Reality: you either have COVID-19 (+) or you don’t (-).

- Test: the test either comes up positive (+) or negative (-).

These are not the same! The test can lie to you, hopefully with small probability. If you run the test on $N$ people, you come up with people divided among 4 cases:

- True Positives: $TP$ of them who have COVID-19 and test positive.

- True Negatives: $TN$ of them who do not have COVID-19 and test negative.

- False Positives: $FP$ of them who do not have COVID-19 but the test lies and gives a positive anyway.

- False Negatives: $FN$ of them who do have COVID-19 but the test lies and gives a negative anyway.

Obviously that’s all the cases:

\[N = TP + TN + FP + FN\]

I mean, it’s just 4 integers. How hard can it be? (Never say this.)

These can be arranged in a table, as shown here. The test result (+/- for the test readout) is shown on the rows, but the unknown truth of the matter is shown on the columns (+/- for having COVID-19 or not). Obviously, you’d like that table to be diagonal: as near as you can get, $FN = 0$ and $FP = 0$ so that the test always tells you the truth.

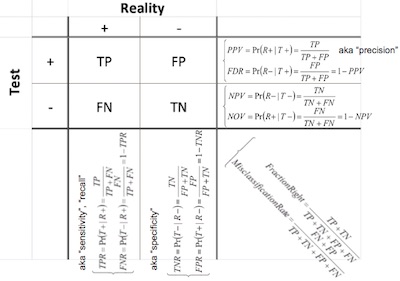

If you’re the developer of the test, you try to engineer that. In fact, you try very hard! You run the test on samples of known COVID-19 status, and measure the Bayesian probability of the test lying either way, called the False Positive Rate and the False Negative Rate:

\[\begin{alignat*}{4} \mbox{FPR} &= \Pr(\mbox{Test+} | \mbox{Reality-}) &&= \frac{FP}{FP + TN} \\ \mbox{FNR} &= \Pr(\mbox{Test-} | \mbox{Reality+}) &&= \frac{FN}{FN + TP} \end{alignat*}\]

Usually people keep those 2 types of error separated, since there are different consequences of a false positive (somebody gets treated for a disease they don’t have, which is bad) and a false negative (somebody doesn’t get treated for a disease they do have, which is really bad). But if you wanted to, you could just lump them together into the stuff you get right and the stuff you get wrong (usually called the Misclassification Rate):

\[\begin{align*} \mbox{Fraction Right} &= \frac{TP + TN}{TP + TN + FP + FN} \\ \mbox{Misclassification Rate} &= \frac{FP + FN}{TP + TN + FP + FN} \end{align*}\]

So the developers at ACON Laboratories fiddled about with the test, trying to minimize the $\mbox{FNR}$ and $\mbox{FPR}$. Good for them. They did it well enough that the FDA authorized their test last October. (Sheesh, why so long? More than a year and a half into a global pandemic?!)
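As a minimal sketch of the definitions above, here is how the developer-side error rates fall out of the 4 counts. The function name `error_rates` and the example counts are illustrative assumptions, not numbers from any real trial.

```python
def error_rates(tp, tn, fp, fn):
    """Developer-side error rates from the 4 counts of a 2x2 table."""
    n = tp + tn + fp + fn
    return {
        "FPR": fp / (fp + tn),               # Pr(Test+ | Reality-)
        "FNR": fn / (fn + tp),               # Pr(Test- | Reality+)
        "fraction_right": (tp + tn) / n,     # everything on the diagonal
        "misclassification": (fp + fn) / n,  # everything off the diagonal
    }


# Hypothetical counts, chosen only for illustration.
rates = error_rates(tp=90, tn=95, fp=5, fn=10)
for name, value in rates.items():
    print(f"{name}: {value:.3f}")
```

Note that FPR and FNR each condition on a column of the table (the reality), which is exactly why they answer the developer's question rather than the patient's.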

But I’m not the test developer: I don’t care about optimizing their assay. I want to know if my spouse has COVID-19 or not! For that, we have other measures, some of which are the Bayesian duals of the above. Here are the 4 cases:

- Positive Predictive Value (PPV): If the test comes up positive, what’s the probability you have COVID-19?

- Negative Predictive Value (NPV): If the test comes up negative, what’s the probability you do not have COVID-19?

- False Discovery Rate (FDR): If the test comes up positive, what’s the probability the test lied and you’re actually still ok and do not have COVID-19? This is the Bayesian dual of the False Positive Rate above.

- Negative Overlooked Value (NOV), more commonly called the False Omission Rate: If the test comes up negative, what’s the probability the test lied and you really do have COVID-19? This is the Bayesian dual of the False Negative Rate above.
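For reference, here is a sketch of those 4 quantities written in terms of the table counts. The PPV and NPV forms are the same ones used in the calculation further down; the FDR and NOV forms are filled in here from the verbal definitions, so treat them as a reading of those definitions:

\[\begin{align*} \mbox{PPV} &= \Pr(\mbox{Reality+} | \mbox{Test+}) = \frac{TP}{TP + FP} \\ \mbox{NPV} &= \Pr(\mbox{Reality-} | \mbox{Test-}) = \frac{TN}{TN + FN} \\ \mbox{FDR} &= \Pr(\mbox{Reality-} | \mbox{Test+}) = \frac{FP}{TP + FP} = 1 - \mbox{PPV} \\ \mbox{NOV} &= \Pr(\mbox{Reality+} | \mbox{Test-}) = \frac{FN}{TN + FN} = 1 - \mbox{NPV} \end{align*}\]

Each of these conditions on a row of the table (the test readout), where FPR and FNR condition on a column (the reality).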

We can annotate our little 2x2 table to show those as well, and you can see all the different ways to quantify error and correctness of a binary test. That’s what’s shown here (click to embiggen).

How about some concrete numbers? The package insert for the test said [2]:

Q: HOW ACCURATE IS THIS TEST?

A: The performance of Flowflex COVID-19 Antigen Home Test was established in an allcomers clinical study conducted between March 2021 and May 2021 with 172 nasal swabs self-collected or pair-collected by another study participant from 108 individual symptomatic patients (within 7 days of onset) suspected of COVID-19 and 64 asymptomatic patients. All subjects were screened for the presence or absence of COVID-19 symptoms within two weeks of study enrollment. The Flowflex COVID-19 Antigen Home Test was compared to an FDA authorized molecular SARS-CoV-2 test. The Flowflex COVID-19 Antigen Home Test correctly identified 93% of positive specimens and 100% of negative specimens.

So we know $N = 172$, with $S = TP + FN = 108$ (“S” for “sick”) presumed COVID-19 subjects and $H = TN + FP = 64$ (“H” for “healthy”) healthy subjects. We’ll interpret the quoted 93% and 100% as the True Positive Rate and True Negative Rate. So we have 4 equations in the 4 unknowns $TP$, $TN$, $FP$, $FN$:

\[\begin{align*} TP + FN &= S \\ TN + FP &= H \\ \mbox{TPR} &= \frac{TP}{TP + FN} \\ \mbox{TNR} &= \frac{TN}{TN + FP} \end{align*}\]

Pretty obviously, the solution is:

\[\begin{alignat*}{4} TP &= \mbox{TPR} \cdot S &&= 0.93 \times 108 &&= 100.44 \\ TN &= \mbox{TNR} \cdot H &&= 1.00 \times 64 &&= 64 \\ FN &= (1 - \mbox{TPR}) \cdot S &&= (1.00 - 0.93) \times 108 &&= 7.56 \\ FP &= (1 - \mbox{TNR}) \cdot H &&= (1.0 - 1.0) \times 64 &&= 0 \end{alignat*}\]

Now we’ve reconstructed the counts in the trial. Approximately: almost certainly we should round 100.44 to 100 and 7.56 to 8, because humans usually come in integer quantities (conjoined twins notwithstanding). That would amount to a TPR of 92.59% instead of the 93% to which they sensibly rounded. Armed with that, we can compute the Positive Predictive Value and the Negative Predictive Value:

\[\begin{alignat*}{5} \mbox{PPV} &= \frac{TP}{TP + FP} &&= \frac{100.44}{100.44 + 0} &&= 100.0\% \\ \mbox{NPV} &= \frac{TN}{TN + FN} &&= \frac{64}{64 + 7.56} &&= 89.4\% \end{alignat*}\]

Result:

- If the test is positive: be 100% (ish) sure we have a COVID-19 case.

- If the test is negative: be 89.4% sure we do not have a COVID-19 case (which is pretty good, as these things go).
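The arithmetic above can be replayed in a few lines. This is only a sketch under the same reading of the package insert used in the text (108 subjects treated as sick, 64 as healthy, 93% and 100% read as TPR and TNR); the function names and the `round_to_people` flag are made up for illustration.

```python
# Figures quoted in the package insert, interpreted as in the text above.
SICK, HEALTHY = 108, 64   # S and H
TPR, TNR = 0.93, 1.00


def reconstruct_counts(tpr, tnr, sick, healthy, round_to_people=False):
    """Solve the 4 equations for TP, TN, FP, FN."""
    tp, fn = tpr * sick, (1 - tpr) * sick
    tn, fp = tnr * healthy, (1 - tnr) * healthy
    if round_to_people:
        # Humans come in integer quantities.
        tp, tn, fp, fn = (round(x) for x in (tp, tn, fp, fn))
    return tp, tn, fp, fn


def ppv_npv(tp, tn, fp, fn):
    """Positive and Negative Predictive Values from the counts."""
    return tp / (tp + fp), tn / (tn + fn)


ppv_frac, npv_frac = ppv_npv(*reconstruct_counts(TPR, TNR, SICK, HEALTHY))
ppv_int, npv_int = ppv_npv(*reconstruct_counts(TPR, TNR, SICK, HEALTHY, round_to_people=True))

print(f"fractional counts: PPV = {ppv_frac:.1%}, NPV = {npv_frac:.1%}")
print(f"rounded counts:    PPV = {ppv_int:.1%}, NPV = {npv_int:.1%}")
```

With whole people instead of fractional ones, the NPV moves by about half a percentage point, which is a small reminder of how soft the third significant figure is.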

Grumble: Why couldn’t they just quote the PPV and NPV on the box, and not make me go through all that?! This is the sort of thing that makes a grizzled old statistician grumpy.

Now… how would one go about putting confidence limits on the PPV and NPV? Hmm…

Ding! The kitchen timer went off. No time for confidence limits; time now to read the test.

The result

Ultimately, as you can see here, the test was negative: only the C bar showed up (i.e., the test worked), and not a trace of the T bar (i.e., no viral antigens detected). Big sigh of relief! (Exactly 89.4% of the biggest possible sigh of relief, as you will understand if by some happy accident you chanced to wade through the math above.)

We also breathed sighs of relief on behalf of the elderly people visited this week by the Weekend Editrix and her minions. At least none of them will inadvertently get sick from the kindness of their visitors.

The Weekend Conclusion

- In the US, our medical system in general is cruel, and our COVID-19 testing system is laughable: difficult to access, low in availability, and out of reach altogether if money is lacking.

- People really don’t understand the difference between a False Positive Rate and a False Discovery Rate. Or appreciate that what they really want to know is the Positive Predictive Value and the Negative Predictive Value. Tsk! (Admittedly, I have niche tastes.)

- But after all that, we’re still relieved to be 89.4% sure we’re COVID-19 free here at Chez Weekend.

Addendum 2021-Dec-22: XKCD shows us all How It Is Done

A member of the Weekend Commentariat (email division) wishes to point out that Randall Munroe, the chaotic good genius behind the wonderfully perverse XKCD, has shown us all The Correct Way to interpret COVID-19 rapid antigen tests:

(Read the mouseover text. I want some of that anti-coronavirus COVID+19 stuff!)

Notes & References

1: JE Shuren, “Coronavirus (COVID-19) Update: FDA Authorizes Additional OTC Home Test to Increase Access to Rapid Testing for Consumers”, FDA.gov, 2021-Oct-04.

2: ACON Laboratories Staff, “Flowflex COVID-19 Antigen Home Test Package Insert”, ACON Labs, retrieved 2021-Dec-10.

Gestae Commentaria

Have you or the manufacturers given any thought to how much of the false negatives reported might be due to faulty sample collection? Perhaps there are so many other possible reasons for false negatives that this is a minor concern? (such as low viral load may result in higher false negs)

I know too many people who would be squeamish about sticking a swab up their nose or swabbing the back of the throat to do the test properly. If the study was closely supervised to ensure high quality sampling, then that minimizes the concern over the accuracy of the vendor’s claims. (BTW, Acon PROBABLY used 93% for marketing, as it is better than 89%.) In general, people don’t take time to understand statistics.

Bienvenue à la Revolution, Citoyen Jean! (Also, of course: welcome to the Weekend Commentariat.)

I’m not privy to what goes on in diagnostic test development companies, though I’ve been involved in the research behind some of them (deriving ab initio predictive response biomarkers for cancer drug candidates, mostly). People do indeed worry about consumer-grade tests, where the persons administering the test may not quite know what they’re doing. “Pilot error” is a real thing!

So if you have a positive pregnancy test, when you go to the doctor you probably get another pregnancy test, done in their hands. I don’t know if that’s also the case with COVID-19, but I’ll bet if you show up with a positive antigen test your doc will prescribe a PCR test. (Then maybe a prescription for paxlovid, and heartfelt “best wishes” at finding a pharmacy to fill it.)

Amen to that.

I’m certain some people at Acon understand the difference between TPR = 93% versus NPV = 89.4%, and that the latter is more useful. I’m equally certain their marketing department regards those people as nerds who must be grudgingly tolerated, but to whom they need not actually listen.

It would help if they just said: hey, if the test comes up positive it’s almost certain you have COVID-19; otherwise if the test comes up negative it’s only about an 11% chance you have COVID-19. Retest negative again in 24hr and you can knock that down to about 1%.

I hope most people could understand that.

As it says on the quotes page for this blog:

Alas, that was a century ago.

Later: Also worth noting from 3 days ago is an article at Your Local Epidemiologist with five quantitative studies of antigen tests in the wild, i.e., as self-administered by ordinary people in the context of Omicron. Still looks pretty good, and that’s a hopeful sign!

Relieved to hear the test was negative!

But doesn’t your 89.4% calculation only go through if we assume the base rate of illness relevant to Editrix (aka the prior Pr (Reality+)) is the same as the base rate of sick people in the trial for the test?

That seems unlikely to be exactly right as the value for Pr(Reality+). I would think the actual correct prior would be something like the attack rate of the virus for a contact like the one that prompted the test. (I’d expect that to be quite low–the within-household attack rate measured from contact tracing was about 20% when I looked it up pre-vaccination, this should be raised due to Delta no doubt, but also lowered due to vaccination, and this wasn’t a within-household contact… so on the whole I would think the prior ought to be 20% or lower.)

Which is good news, by the way. It would mean NPV=TN/(TN - FN) is something more like 1/(1-0.2*0.07)… since 0.2*0.07 is about the proportion of false negatives you would expect with a base rate of 20%… which is much better than 89.4%!

And of course the false negative probability isn’t really literally 100.0%; that should be modified down depending on the error bars from the trial, whatever those were.

Oops, some sign errors there and the multiplication symbol didn’t come through.

Correction:

NPV = TN/(TN + FN) = 1/(1 + 0.2 x 0.07) = 98.6%

First things first, Dave: welcome to the commentariat, and thanks for the kind wishes!

No, that’s not quite how this works.

You are thinking, possibly, of the vaccine trials? There, the efficacy readout was at bottom a comparison between the rate of infection in the vaccine arm vs the base rate of infection in the general population as represented by the control arm. So knowing the base rate, as measured by the controls, was essential.

Here, though, we’re not comparing rates, just the status of the next sample. In the assay trial, we want to know how often the assay is right and wrong (and which way it’s wrong), when presented with a number of people of known COVID-19 status. In this case, the assay was tested on 108 sick people and 64 healthy people. The background rate in the population doesn’t matter so much; we assume that all COVID-19 patients are similar enough at the molecular level that the assay will come out the same (plus noise, which models the error rate).

Interestingly, that will not remain the case over time: COVID-19 patients will change at the molecular level due to new viral variants. So the assays are usually optimized to be sensitive to several spots on the relevant viral proteins. That way the virus must mutate massively to evade detection… which, alas, seems to be what Omicron has done. That’s the source of SGTF: PCR tests measure several genes, but if the primers for the spike gene (S) fail due to massive mutations in Omicron and the other genes show up, then you’re looking at Omicron.

But I’m glad you raised the issue. It sure seems like some background rate should matter, doesn’t it? Your question parallels a criticism lobbed in my general direction by another colleague who is unaccountably shy to use the comment system: he says I’ve invoked Bayes Rule talismanically, without explicitly using it.

While that’s a bit of an extreme view, let me here use Bayes explicitly for him and also so you can see what the relevant background rate is.

We want to calculate the Positive Predictive Value, when what we know are the assay parameters like TPR, FNR, TNR, FPR (see graphic above):

\[\begin{align*} PPV &= \Pr(R+ | T+) \\ &= \frac{ \Pr(T+ | R+) \times \Pr(R+)}{ \Pr(T+) } \\ &= \frac{ \Pr(T+ | R+) \times \Pr(R+)}{ \Pr(T+ | R+) \times \Pr(R+) + \Pr(T+ | R-) \times \Pr(R-) } \\ &= \frac{TPR \times \Pr(R+)}{ TPR \times \Pr(R+) + FPR \times \Pr(R-) } \end{align*}\]

Looking at the 2x2 table above, or even the definitions written in the margin, we see the quantities we need:

\[\begin{align*} TPR &= \frac{TP}{TP + FN} \\ FPR &= \frac{FP}{FP + TN} \\ \Pr(R+) &= \frac{TP + FN}{N} \\ \Pr(R-) &= \frac{FP + TN}{N} \end{align*}\]

Here the $\Pr(R\pm)$ is the rate in the test population, not the population at large. Unlike the vaccine trial, where we want the control arm to mimic the unvaccinated population, here we do not. We just want a known number of COVID-19 patients and healthy patients.

So plug that into the Bayes equation above, to obtain:

\[\begin{align*} PPV = \Pr(R+ | T+) &= \frac{\frac{TP}{TP+FN} \times \frac{TP + FN}{N}}{\frac{TP}{TP+FN} \times \frac{TP + FN}{N} + \frac{FP}{FP+TN} \times \frac{FP+TN}{N}} \\ &= \frac{TP}{TP+FP} \end{align*}\]

… which is what we got from direct inspection of the first row of the 2x2 table.
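A quick numerical sanity check of that algebra, as a sketch: the counts below are hypothetical (picked with $FP > 0$ so the check isn’t trivial), and `ppv_bayes` is just an illustrative name for the last line of the Bayes derivation.

```python
def ppv_bayes(tpr, fpr, prior):
    """PPV = Pr(R+ | T+) via Bayes Rule, given Pr(R+) = prior."""
    return tpr * prior / (tpr * prior + fpr * (1 - prior))


# Hypothetical counts, not from the Flowflex trial.
tp, tn, fp, fn = 90, 95, 5, 10
n = tp + tn + fp + fn

ppv_from_table = tp / (tp + fp)       # read straight off the Test+ row
ppv_from_bayes = ppv_bayes(
    tpr=tp / (tp + fn),
    fpr=fp / (fp + tn),
    prior=(tp + fn) / n,              # the rate in the test population
)

print(ppv_from_table, ppv_from_bayes)
```

The two agree only because the prior plugged in is the test population’s own mix of sick and healthy subjects; feed `ppv_bayes` a different prior and the answer moves.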

Does that help?

You are of course completely correct here. In my defense, I will merely mention that the test took only 15 minutes and I couldn’t get to the confidence limits that fast. :-) I think the result might be Beta-distributed and I could get confidence limits from that, but I’d have to think it over to be sure.
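One way that Beta idea might be sketched, with loud caveats: this assumes a uniform Beta(1, 1) prior on each predictive value, uses the rounded counts from the post (TP = 100, FP = 0, TN = 64, FN = 8), and gets the interval by brute-force sampling with the standard library rather than a proper quantile function. The name `beta_interval` is hypothetical, and the result only reflects sampling noise in the trial, nothing else.

```python
import random


def beta_interval(successes, failures, level=0.95, draws=100_000, seed=0):
    """Monte Carlo equal-tailed credible interval for a proportion.

    Assumes a uniform Beta(1, 1) prior, so the posterior is
    Beta(successes + 1, failures + 1).
    """
    rng = random.Random(seed)
    samples = sorted(
        rng.betavariate(successes + 1, failures + 1) for _ in range(draws)
    )
    tail = (1 - level) / 2
    lo = samples[int(tail * draws)]
    hi = samples[int((1 - tail) * draws) - 1]
    return lo, hi


# NPV: of 72 negative readouts, 64 were truly negative.
npv_lo, npv_hi = beta_interval(successes=64, failures=8)
# PPV: of 100 positive readouts, all 100 were truly positive.
ppv_lo, ppv_hi = beta_interval(successes=100, failures=0)

print(f"NPV 95% interval: {npv_lo:.3f} to {npv_hi:.3f}")
print(f"PPV 95% interval: {ppv_lo:.3f} to {ppv_hi:.3f}")
```

Under these assumptions the PPV interval stops a few percentage points short of 100%, which is roughly what the “(ish)” in “100% (ish)” was standing in for.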

Thanks! It’s been a pleasure to read your very informative posts on the state of the evidence and the regulatory agencies.

On the question at hand: I see how that gives the correct posterior probability of infection for someone who was a participant in the trial, but that’s not the circumstance in which the test is being used here.

Is the conclusion you’re drawing that anyone who takes the FlowFlex test and gets a negative result should emerge with an 89.4% probability that they’re not infected? Surely that’s generalizing way too far… For example, if an astronaut near the end of a three-month International Space Station mission takes the test, their conclusion should be 99.9-some-% that they aren’t infected. Surely the same goes even if the test is negative!

The example is meant to illustrate that the posterior probability should be highly dependent on things like the number and closeness of contacts for the individual taking the test.
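To make that dependence concrete, here is a small sketch that recomputes the NPV for several priors, keeping the package-insert figures (TPR = 93%, TNR = 100%) fixed. The function name `npv_bayes` and the particular priors tried are illustrative assumptions; only the first prior, 108/172, comes from the trial.

```python
def npv_bayes(prior, tpr=0.93, tnr=1.00):
    """Pr(Reality- | Test-) for a given prior Pr(Reality+)."""
    fnr = 1 - tpr
    healthy_and_negative = tnr * (1 - prior)
    sick_and_negative = fnr * prior
    return healthy_and_negative / (healthy_and_negative + sick_and_negative)


priors = {
    "trial mix (108/172)": 108 / 172,
    "household-style attack rate": 0.20,   # the 20% figure mentioned above
    "casual contact (made up)": 0.05,
    "astronaut (made up)": 0.001,
}

for label, prior in priors.items():
    print(f"{label:30s} prior = {prior:.3f}  NPV = {npv_bayes(prior):.4f}")
```

With the trial’s own mix as the prior this reproduces the 89.4% from the post, and at a prior of 0.20 it lands a little above 98%, in the same neighborhood as the back-of-the-envelope 98.6% above.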

Would a second test with the same rapid test result in an improved NPV to the degree that simple mathematical extrapolation would imply?

0.106 squared equals 0.011, which gives an NPV of 98.9%.

But, this might not work if there is some issue that causes repetitive errors in the particular test (eg, if a specific viral strain is difficult for this test to identify). Such an issue would mean that the errors in the test are not simply random. It might be that for 5% of Covid viral strains the test always fails to recognize it, and for the remaining 95% of samples it randomly fails to recognize another 5.6%.

Welcome to the commentariat, Prism!

Oddly enough, a medical friend read this post and reminded me that while the gold standard is a PCR test, the silver standard is to use a lateral flow test like this one, but insisting on 2 negative results 24hr apart.

So your suggestion turns out to be the standard of practice.

If, as you worked out, the 2 consecutive tests were independent events (probabilistically), then the NOV of the pair would be the square of the NOV of a single test: 10.6% squared is about 1%.

However, as you also began working out, it’s unlikely the tests are completely independent. They might share a common failure mode, or share a common blindness to a viral variant, or share a common ham-fisted blunderer administering the test (me). I don’t know quite how to quantify that, so I might just say the chance of COVID-19 given a 2nd negative test would be lower than 10%, but bigger than 1%.
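Here is one hedged way to put numbers on that range. It updates the odds of infection after 1 and 2 negative tests, first assuming the two tests miss independently, then assuming a hypothetical 5% of infections are always missed (borrowing the 5% from the strain example above) while the single-test miss rate stays at 7%. The prior is the trial mix used in the post; the function and variable names are illustrative.

```python
def infected_given_negatives(prior, miss_once, miss_twice, tnr=1.0):
    """Pr(infected) after 1 and after 2 negative tests, via odds-form Bayes.

    miss_once  = Pr(1 negative  | infected)
    miss_twice = Pr(2 negatives | infected)
    Healthy people are assumed to test negative independently with rate tnr.
    """
    prior_odds = prior / (1 - prior)
    odds_one = prior_odds * miss_once / tnr
    odds_two = prior_odds * miss_twice / tnr ** 2
    return odds_one / (1 + odds_one), odds_two / (1 + odds_two)


PRIOR = 108 / 172   # trial mix, as in the post
FNR = 0.07          # 1 - TPR from the package insert

# Case 1: the two tests miss independently.
one_indep, two_indep = infected_given_negatives(PRIOR, FNR, FNR ** 2)

# Case 2 (hypothetical): a fraction `stuck` of infections is always missed,
# the rest are missed at random, with the single-test FNR held at 7%.
stuck = 0.05
random_miss = (FNR - stuck) / (1 - stuck)
miss_twice_mixed = stuck + (1 - stuck) * random_miss ** 2
one_mixed, two_mixed = infected_given_negatives(PRIOR, FNR, miss_twice_mixed)

print(f"independent:    {one_indep:.1%} after 1 negative, {two_indep:.1%} after 2")
print(f"shared failure: {one_mixed:.1%} after 1 negative, {two_mixed:.1%} after 2")
```

In this toy model the shared failure mode does nothing to a single test but eats most of the benefit of the second one, which is the qualitative point: the answer sits somewhere between the independent figure and the single-test figure.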