On the Reproducibility of Twitter Polls

Tagged: COVID / MathInTheNews / R / Statistics

Everybody knows Twitter polls are… questionable. But are they reproducible?

Twitter Polls

There are all sorts of problems with Twitter polls:

- Huge sample bias, since the respondents self-select from among those who saw the poll. Twitter respondents tell us little about the public in general.

- Differences in phrasing that look minor to those unbaptised in sampling survey design can hugely affect the results.

- Twitter is global, so effects across nations, ethnicities, and cultures are all mixed together in a way that is not measured.

… and about 17 other things. (Don’t tempt me to go full Cyrano de Bergerac on this.)

A Natural Experiment

Over at Don’t Worry About the Vase, Zvi mused about the reliability and reproducibility of Twitter polls, and conducted what almost amounts to a natural experiment. [1] In a natural experiment, we expose subjects to the variables under our control, but also to the farrago of all the other factors not under our control. Like, say, a Twitter poll.

The original subject was a couple of Twitter polls about people’s psychological health in the pandemic. The results differed slightly, but the questions also differed, as, presumably, did the sets of followers of the two instigators. Many other details were different, on top of the huge sample bias and other problems. Fair enough.

This led to some discussion about whether this is “weak evidence” (causes a small Bayesian update, but in the correct direction), or “bad evidence” (causes an update of any size, but in the wrong direction). That’s actually a startlingly good summary of the situation: bad evidence has biases that cover up the truth in a non-recoverable way and then does damage to your cognitive model of the world.

Amusingly:

- When a philosopher showed up in the conversation, Zvi summarized the situation as: “Getting into a Socratic dialog with a Socratic philosopher, and letting them play the role of Socrates. Classic blunder.” … which is just about perfect. (It happens I have a friend who is a professor of the classics, specializing in ancient Greek, who thinks it’s perfect too.)

- They also went full meta, with a Twitter poll on the merits of Twitter polls. I’m sure Doug Hofstadter would approve. Heaven knows I thought it was hilarious, but then I’m a bit weird.

The almost-natural experiment was to repeat someone else’s poll, word for word, and collect proportions to compare:

- Q: What’s your experience of people in general since the onset of the pandemic?

- A: More stable, about the same as before, or less stable.

For a variety of reasons, Zvi snapshotted his poll at 2 time points, for comparison with Patrick Collison’s survey from a week earlier.

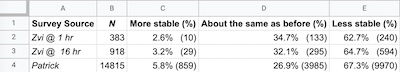

The data are shown here, both as the percentages reported and the counts inferred from them. The 3 polls certainly look very close, based on percentage breakdowns. But can we make that quantitative?

Yes, we can.

These are 3 results drawn from a multinomial distribution (like binomial, but in this case with 3 outcomes). We want to know if they’re all from the same distribution or not. (More precisely, we’ll do pairwise comparison of each of Zvi’s snapshots with Patrick’s, but won’t bother comparing Zvi’s 2 snapshots.) We’re not asking if any of these are accurate in any way, just whether or not they’re the same.

Just so you’ll know it’s not me spitballing the test here, we’ll follow the guidance offered by the Office of Evaluation Sciences of the General Services Administration of the US government. [2] Not perfect, but a source many (must) take seriously.

The recommendation is a $\chi^2$ test, which compares the observed counts with the counts we’d expect if both polls shared one distribution, sums the squared differences (each scaled by its expected count), and asks how probable it is to see differences as large as we do. This is a test on a two-way table (poll × answer), as we’re asking if 2 samples are random draws from the same underlying (unknown) distribution.
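Spelled out, the statistic is the usual Pearson form. The symbols here ($O_{ij}$ for the observed count in poll $i$ and answer $j$, $E_{ij}$ for the expected count, $N$ for the grand total, $r$ polls and $c$ answers) are my notation, not something taken from the guidance document:

$$
\chi^2 = \sum_{i=1}^{r} \sum_{j=1}^{c} \frac{(O_{ij} - E_{ij})^2}{E_{ij}},
\qquad
E_{ij} = \frac{\left(\sum_{j'} O_{ij'}\right)\left(\sum_{i'} O_{i'j}\right)}{N},
\qquad
\mathrm{df} = (r - 1)(c - 1)
$$

With 2 polls and 3 answers, that gives $(2 - 1)(3 - 1) = 2$ degrees of freedom.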

```r
> counts <- matrix(c(10, 133, 240, 29, 295, 594, 859, 3985, 9970), byrow = TRUE, nrow = 3, dimnames = list(c("Zvi1hr", "Zvi16hr", "Patrick"), c("More stable", "Same", "Less Stable"))); counts
        More stable Same Less Stable
Zvi1hr           10  133         240
Zvi16hr          29  295         594
Patrick         859 3985        9970
```
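As a quick sanity check, the counts can be turned back into row percentages and poll sizes. This is only a sketch that re-enters the counts from the table above; the variable name `pct` is mine.

```r
# Counts copied from the table above.
counts <- matrix(
  c(10, 133, 240,
    29, 295, 594,
    859, 3985, 9970),
  byrow = TRUE, nrow = 3,
  dimnames = list(c("Zvi1hr", "Zvi16hr", "Patrick"),
                  c("More stable", "Same", "Less Stable"))
)

# Row-wise proportions, shown as percentages rounded to one decimal place.
pct <- round(100 * prop.table(counts, margin = 1), 1)
print(pct)

# Number of respondents in each poll.
print(rowSums(counts))
```

The poll sizes matter later: Patrick’s poll is much larger than either of Zvi’s snapshots.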

```r
> chisq.test(counts[c("Zvi1hr", "Patrick"), ])

	Pearson's Chi-squared test

data:  counts[c("Zvi1hr", "Patrick"), ]
X-squared = 16.267, df = 2, p-value = 0.0002935

> chisq.test(counts[c("Zvi16hr", "Patrick"), ])

	Pearson's Chi-squared test

data:  counts[c("Zvi16hr", "Patrick"), ]
X-squared = 20.244, df = 2, p-value = 4.018e-05
```
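To see that there’s nothing mysterious inside `chisq.test`, here is a sketch that rebuilds the first comparison (Zvi’s 1-hour snapshot vs Patrick) by hand from expected counts. The variable names are mine; the counts are the ones above.

```r
counts <- matrix(c(10, 133, 240,
                   859, 3985, 9970),
                 byrow = TRUE, nrow = 2,
                 dimnames = list(c("Zvi1hr", "Patrick"),
                                 c("More stable", "Same", "Less Stable")))

# Expected counts if both polls shared one distribution:
# (row total * column total) / grand total.
expected <- outer(rowSums(counts), colSums(counts)) / sum(counts)

# Pearson statistic, degrees of freedom, and upper-tail p-value.
x2 <- sum((counts - expected)^2 / expected)
df <- (nrow(counts) - 1) * (ncol(counts) - 1)
p  <- pchisq(x2, df = df, lower.tail = FALSE)

print(round(expected, 1))
cat("X-squared =", round(x2, 3), " df =", df, " p-value =", signif(p, 4), "\n")
```

Most of the statistic comes from Zvi’s row, where the expected counts are small enough that a deficit of a dozen “more stable” answers is a big relative miss.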

Now that’s interesting! Both $p$-values are highly statistically significant, in spite of the very small differences in observed proportions. We can check this with a Fisher exact test, and we get similar results.
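For the curious, a Fisher check might look like the sketch below. I’m assuming the Monte Carlo option of `fisher.test` here, since the exact algorithm can get expensive on tables this large; that means the $p$-values are estimates and cannot go below $1/(B+1)$. The helper `fisher_mc` and the seed are mine.

```r
counts <- matrix(
  c(10, 133, 240,
    29, 295, 594,
    859, 3985, 9970),
  byrow = TRUE, nrow = 3,
  dimnames = list(c("Zvi1hr", "Zvi16hr", "Patrick"),
                  c("More stable", "Same", "Less Stable"))
)

set.seed(20220920)  # arbitrary seed, only so reruns give the same estimate

# Monte Carlo Fisher test: the p-value is estimated from B random tables
# with the observed margins, so it can never be smaller than 1 / (B + 1).
fisher_mc <- function(rows, B = 10000) {
  fisher.test(counts[rows, ], simulate.p.value = TRUE, B = B)$p.value
}

p_1hr  <- fisher_mc(c("Zvi1hr",  "Patrick"))
p_16hr <- fisher_mc(c("Zvi16hr", "Patrick"))
print(c(Zvi1hr = p_1hr, Zvi16hr = p_16hr))
```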

How can that be? Well, Patrick’s survey has about 38 and 16 times as many respondents as Zvi’s 2 snapshots, respectively. When you have a giant pile of data, even small differences can come out significant. Also, when the sample sizes are unbalanced like this, you bias in favor of the larger class (Patrick’s).
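Here is a toy illustration of the giant-pile-of-data effect. The table below is hypothetical (PollA and PollB are invented, with proportions merely in the same neighborhood as the real polls); the point is only that if you keep the proportions fixed and multiply every count by $k$, the Pearson statistic is multiplied by $k$ too.

```r
# Hypothetical 2 x 3 table, invented purely for illustration.
tab <- matrix(c( 6, 64, 130,
                10, 54, 136),
              byrow = TRUE, nrow = 2,
              dimnames = list(c("PollA", "PollB"),
                              c("More stable", "Same", "Less Stable")))

# Same proportions, k times as many respondents in each poll.
scale_up <- function(k) {
  res <- chisq.test(k * tab)
  data.frame(k = k,
             n_per_poll = k * sum(tab[1, ]),
             x_squared = unname(res$statistic),
             p_value = res$p.value)
}

results <- do.call(rbind, lapply(c(1, 10, 100), scale_up))
print(results)
```

The differences in proportions never change across the rows of `results`; only the sample size does.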

The usual response here is something like case-control sampling, where you down-sample the large group multiple times to get more representative cohorts. We can’t do that here, since we don’t have individual response data. But we can assume the subsample of Patrick’s respondents would have the same proportions, and just scale down his counts to match Zvi’s to see what happens. The row sums of the downsampled tables show that we’ve synthesized a dataset in which Zvi and Patrick had the same number of respondents:

```r
> downsample1 <- t(transform(t(counts[c("Zvi1hr", "Patrick"), ]), Patrick = round(Patrick / 38.68))); downsample1
        More stable Same Less Stable
Zvi1hr           10  133         240
Patrick          22  103         258

> chisq.test(downsample1)

	Pearson's Chi-squared test

data:  downsample1
X-squared = 8.9642, df = 2, p-value = 0.01131
```

```r
> downsample2 <- t(transform(t(counts[c("Zvi16hr", "Patrick"), ]), Patrick = round(Patrick / 16.14))); downsample2
        More stable Same Less Stable
Zvi16hr          29  295         594
Patrick          53  247         618

> chisq.test(downsample2)

	Pearson's Chi-squared test

data:  downsample2
X-squared = 11.751, df = 2, p-value = 0.002808
```

Still statistically significant, though not at such eye-watering levels as at first.
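Scaling the counts gives one idealized subsample with no sampling noise in it. A possible alternative, sketched below, is to approximate repeated down-sampling directly from the counts. This rests on an assumption of mine: that respondents within an answer category are interchangeable, so drawing 383 anonymous labels without replacement from Patrick’s totals stands in for drawing 383 of his respondents. The names `labels`, `one_draw`, and `p_values`, the seed, and the choice of 1000 repeats are all arbitrary.

```r
set.seed(1)  # arbitrary seed for repeatability

zvi     <- c("More stable" = 10,  "Same" = 133,  "Less Stable" = 240)
patrick <- c("More stable" = 859, "Same" = 3985, "Less Stable" = 9970)

# One anonymous label per Patrick respondent.
labels <- factor(rep(names(patrick), times = patrick), levels = names(patrick))

# Draw a subsample the size of Zvi's poll (without replacement),
# tabulate it, and test it against Zvi's counts.
one_draw <- function() {
  sub <- table(sample(labels, size = sum(zvi)))
  chisq.test(rbind(Zvi1hr = zvi, Patrick = as.vector(sub)))$p.value
}

p_values <- replicate(1000, one_draw())
print(summary(p_values))
print(mean(p_values < 0.05))  # share of subsamples that differ at the 5% level
```

The spread of `p_values` shows how much the verdict depends on which subsample you happen to draw, which the single scaled-down table cannot tell you.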

What’s happening? Basically, there’s a real difference: Patrick found more results at the “more stable” and “less stable” ends, with fewer in the “about the same” bucket (27% vs 32% to 35%). You can see this from the proportion data above, but I was a bit surprised to see it statistically significant.

But: does it matter? That is to say, we’ve found a “real” effect here that is statistically significant (unlikely to be simple sample fluctuations); is it big enough that we should care? That is, is the effect size large enough to move us to make a different decision based on the two datasets?

Probably not! Zvi’s and Patrick’s followers are different, but oh-so-very slightly: so slightly that nobody should update much on that fact.
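One way to put a number on “oh-so-very slightly” is an effect-size measure. Cramér’s V is my choice for this sketch (nothing above depends on it), computed as $\sqrt{\chi^2 / (N \cdot (\min(r, c) - 1))}$; by the usual rule of thumb, values below about 0.1 count as small.

```r
counts <- matrix(
  c(10, 133, 240,
    29, 295, 594,
    859, 3985, 9970),
  byrow = TRUE, nrow = 3,
  dimnames = list(c("Zvi1hr", "Zvi16hr", "Patrick"),
                  c("More stable", "Same", "Less Stable"))
)

# Cramer's V: the chi-squared statistic rescaled to remove the sample size.
cramers_v <- function(tab) {
  x2 <- unname(chisq.test(tab)$statistic)
  sqrt(x2 / (sum(tab) * (min(dim(tab)) - 1)))
}

v_1hr  <- cramers_v(counts[c("Zvi1hr",  "Patrick"), ])
v_16hr <- cramers_v(counts[c("Zvi16hr", "Patrick"), ])
print(round(c(Zvi1hr = v_1hr, Zvi16hr = v_16hr), 4))
```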

A Bayesian Alternative

For those of you about to pooh-pooh this analysis because it is frequentist – and you know who you are :-) – there is a Bayesian version.

In the case of binomially distributed data, the conjugate prior is a Beta distribution, so your posterior over the $p$ parameter is a Beta distribution too.
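As a reminder of how that update works, with prior hyperparameters $a$ and $b$ (my notation) and $k$ successes observed in $n$ trials:

$$
p \sim \mathrm{Beta}(a, b), \quad k \mid p \sim \mathrm{Binomial}(n, p)
\;\Longrightarrow\;
p \mid k \sim \mathrm{Beta}(a + k,\; b + n - k)
$$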

For multinomially distributed data like this, the conjugate prior is the Dirichlet distribution, so your posterior over the vector of $p_i$ parameters is a Dirichlet distribution too.
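A minimal sketch of what that could look like for these polls, under assumptions that are entirely mine: a flat Dirichlet(1, 1, 1) prior for each poll, independent posteriors, and a home-made `rdirichlet` helper (base R has none) that normalizes Gamma draws. The posterior for each poll is then Dirichlet(prior + counts), and we can look at the difference in the “Same” proportion.

```r
set.seed(42)  # arbitrary seed for repeatability

counts <- rbind(Zvi16hr = c(29, 295, 594),
                Patrick = c(859, 3985, 9970))
colnames(counts) <- c("More stable", "Same", "Less Stable")

prior <- c(1, 1, 1)  # flat Dirichlet prior: an assumption of this sketch

# Draw n vectors from Dirichlet(alpha) by normalizing Gamma(alpha_i, 1) variates.
rdirichlet <- function(n, alpha) {
  g <- matrix(rgamma(n * length(alpha), shape = alpha),
              nrow = n, byrow = TRUE)
  g / rowSums(g)
}

n_draws      <- 10000
post_zvi     <- rdirichlet(n_draws, prior + counts["Zvi16hr", ])
post_patrick <- rdirichlet(n_draws, prior + counts["Patrick", ])

# Posterior for the difference in the "Same" proportion (Zvi minus Patrick).
diff_same <- post_zvi[, 2] - post_patrick[, 2]
ci <- quantile(diff_same, c(0.025, 0.5, 0.975))
print(ci)
print(mean(diff_same > 0))  # posterior probability that Zvi's share is larger
```

The nice part of this version is that the answer comes out directly as a range for the size of the difference, which is the thing we actually care about.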

Almost nobody does this. (Ok, Ed Jaynes, because he was Just That Way. But I can’t think of anybody else.) I’ve only done it once in my career. I was happy with the mathematical purity. But I was sad for pragmatic reasons: I don’t think I ever explained it well enough to the client that they would do what their experiment told them to do. They just glazed over at “all the math stuff”. Sometimes the limits to what you can do relate to social engineering more than anything else.

There are even more alternatives in the non-parametric space, with varying degrees of fancy pants: Kolmogorov-Smirnov test, Kullback-Leibler divergence, and so on. We’ll content ourselves with the multinomial test above.

The Weekend Conclusion

Zvi’s and Patrick’s survey results were different, in that Patrick found statistically significantly fewer respondents saying “about the same”. However, the effect size was small and should probably be ignored.

Notes & References

1: Zvi Mowshowitz, “Twitter Polls: Evidence is Evidence”, Don’t Worry About the Vase blog, 2022-Sep-20.

2: US government OES & GSA, “Guidance on Using Multinomial Tests for Differences in Distribution”, OES publications, retrieved 2022-Sep-20.
